Databricks

Supported Databricks Runtime versions: 12.2 - 17.3

❗ Note: Databricks Serverless compute is not supported by this instrumentation. As an alternative, you can use the dbt agent.

Compatibility Matrix

| Databricks Release | Spark Version | Scala Version | Definity Agent |
|---|---|---|---|
| 17.3_LTS (scala 2.13) | 4.0.0 | 2.13 | 4.0_2.13-latest |
| 16.4_LTS (scala 2.13) | 3.5.2 | 2.13 | 3.5_2.13-latest |
| 16.4_LTS (scala 2.12) | 3.5.2 | 2.12 | 3.5_2.12-latest |
| 15.4_LTS | 3.5.0 | 2.12 | 3.5_2.12-latest |
| 14.3_LTS | 3.5.0 | 2.12 | 3.5_2.12-latest |
| 13.3_LTS | 3.4.1 | 2.12 | 3.4_2.12-latest |
| 12.2_LTS | 3.3.2 | 2.12 | 3.3_2.12-latest |

Setup

TL;DR

Add an init script to your cluster to configure the Definity agent automatically. The script:

- Detects your Spark and Scala versions
- Downloads the matching Definity Spark agent
- Configures the Definity plugin with default settings
- Lets the cluster start normally, without the agent, if any step fails

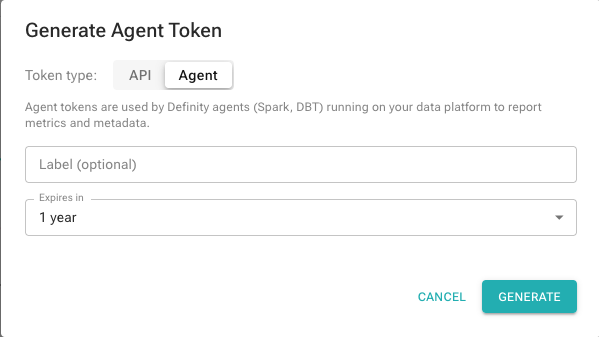

1. Generate an Agent Token in Definity

In the Definity app, click your user avatar (top right) and select Generate Token.

Select the Agent token type, optionally add a label, and click Generate.

Copy the token — you'll need it in the next step.

For a quick evaluation, you can skip Steps 2 and 3: go directly to Step 4 and set your agent token in the script.

2. Store Your Agent Token as a Databricks Secret

Use the Databricks CLI to create a secret scope and store your Definity agent token:

```bash
databricks secrets create-scope definity
databricks secrets put-secret definity agent-token --string-value "<YOUR_AGENT_TOKEN>"
```

Then add the following to your cluster's Environment Variables (Cluster configuration → Advanced options → Spark tab → Environment Variables):

```
DEFINITY_API_TOKEN={{secrets/definity/agent-token}}
```

This makes the token available to the init script at runtime without hardcoding it. See Databricks documentation for more details.
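If the environment variable is missing or empty, the init script in Step 4 falls back to the `<YOUR_AGENT_TOKEN>` placeholder and writes it into the Spark config. A minimal guard you could add to that script before the agent is fetched is sketched below; `check_definity_token` is a hypothetical helper, not part of the Definity-provided script.

```bash
# Hypothetical helper (not part of the Definity script): fails if the token
# is empty or still set to the placeholder from the sample init script.
check_definity_token() {
  local token="$1"
  if [ -z "$token" ] || [ "$token" = "<YOUR_AGENT_TOKEN>" ]; then
    echo "DEFINITY_API_TOKEN is not set; skipping Definity agent setup" >&2
    return 1
  fi
  return 0
}

# In the init script, exit 0 on failure so the cluster still starts:
# check_definity_token "$DEFINITY_API_TOKEN" || exit 0
```

Exiting with status 0 keeps the same behavior as the rest of the script: a misconfigured token skips the agent instead of failing cluster startup.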

3. Upload the Agent JARs

Download the agent JARs for the Spark/Scala versions you use (see Compatibility Matrix) and upload them to a location accessible from your cluster. The init script auto-detects the Spark and Scala version at startup and fetches the matching JAR.
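The file name the init script requests combines the Spark major.minor version, the Scala version, and the agent version. The sketch below reproduces that naming scheme so you can check which files to upload for the runtimes in the Compatibility Matrix; `agent_jar_name` is a hypothetical helper, and its default agent version `latest` matches `DEFINITY_AGENT_VERSION` in the sample script.

```bash
# Hypothetical helper reproducing the init script's JAR naming scheme:
#   definity-spark-agent-<spark major.minor>_<scala version>-<agent version>.jar
agent_jar_name() {
  local full_spark_version="$1"       # e.g. "3.5.0"
  local scala_version="$2"            # e.g. "2.12"
  local agent_version="${3:-latest}"  # e.g. "latest" or "0.80.2"
  local spark_major_minor
  spark_major_minor=$(echo "$full_spark_version" | grep -oE '^[0-9]+\.[0-9]+')
  echo "definity-spark-agent-${spark_major_minor}_${scala_version}-${agent_version}.jar"
}

# Distinct Spark/Scala pairs from the Compatibility Matrix
agent_jar_name 4.0.0 2.13   # 17.3 LTS
agent_jar_name 3.5.2 2.13   # 16.4 LTS (Scala 2.13)
agent_jar_name 3.5.0 2.12   # Spark 3.5 / Scala 2.12: 16.4 LTS (Scala 2.12), 15.4 LTS, 14.3 LTS
agent_jar_name 3.4.1 2.12   # 13.3 LTS
agent_jar_name 3.3.2 2.12   # 12.2 LTS
```

Because only the major.minor Spark version is used, several runtimes share one JAR; for example, 16.4 LTS (Scala 2.12), 15.4 LTS, and 14.3 LTS all resolve to `definity-spark-agent-3.5_2.12-latest.jar`.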

Supported storage options:

| Storage | ARTIFACT_BASE_PATH example | Notes |
|---|---|---|
| Volumes | "/Volumes/<catalog>/<schema>/definity_jars" | Init script must also be stored in a Volume |
| HTTP/HTTPS | "https://your-artifactory.com/repo/libs-release" | Artifactory, Nexus, or any HTTP server |
| S3 | "s3://your-bucket/definity" | Cluster needs an instance profile or IAM role with access |

Note: When storing the agent JAR in a Unity Catalog Volume, the init script must also be stored in a Volume, because Volume credentials are only available to init scripts that are themselves stored in a Volume (see the Databricks documentation).

For example, to store both the agent JAR and the init script in a Volume:

```bash
# Create a Volume (one-time setup)
databricks volumes create <catalog> <schema> definity_jars MANAGED

# Upload the agent JAR
databricks fs cp definity-spark-agent-3.5_2.12-latest.jar \
  dbfs:/Volumes/<catalog>/<schema>/definity_jars/definity-spark-agent-3.5_2.12-latest.jar

# Upload the init script (see Step 4)
databricks fs cp databricks_definity_init.sh \
  dbfs:/Volumes/<catalog>/<schema>/definity_jars/databricks_definity_init.sh
```
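The init script picks the download tool from the prefix of `ARTIFACT_BASE_PATH`: `aws s3 cp` for `s3://` paths, a plain `cp` for `/Volumes` and legacy `/dbfs` paths, and `curl` for everything else. The sketch below isolates that decision so you can check how a given base path will be handled; `fetch_method` is a hypothetical helper, not part of the script.

```bash
# Hypothetical helper mirroring the init script's branching on
# ARTIFACT_BASE_PATH: prints which tool would fetch the agent JAR.
fetch_method() {
  case "$1" in
    s3://*)             echo "aws s3 cp" ;;  # needs instance profile / IAM role
    /Volumes/*|/dbfs/*) echo "cp" ;;         # read as a local path on the node
    *)                  echo "curl" ;;       # treated as an HTTP(S) URL
  esac
}

fetch_method "s3://your-bucket/definity"
fetch_method "/Volumes/<catalog>/<schema>/definity_jars"
fetch_method "https://your-artifactory.com/repo/libs-release"
```

Any base path that does not start with `s3://`, `/Volumes/`, or `/dbfs/` falls through to `curl`, so a mistyped local path will surface as a failed HTTP fetch in the init script log.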

4. Create an Init Script

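Copy the script below. If you stored the token as a secret in Step 2, the script reads it from the DEFINITY_API_TOKEN environment variable; otherwise, replace `<YOUR_AGENT_TOKEN>` with the token you generated in Step 1. For production use, also update ARTIFACT_BASE_PATH to point to your own artifact repository (see Step 3):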

databricks_definity_init.sh

```bash
#!/bin/bash
# ============================================================================
# Definity Agent Configuration for Databricks
# Tested Databricks Runtimes: 12.2 LTS - 17.3 LTS (Spark 3.3 - 4.0)
# ============================================================================
# This script automatically detects your Spark and Scala versions and
# installs the appropriate Definity Spark Agent.
#
# If installation fails, the cluster will start normally without the agent.
# ============================================================================

# ============================================================================
# CONFIGURATION
# ============================================================================

# Base path to the agent JARs.
# The script auto-detects Spark/Scala and appends the JAR filename, e.g.:
#   {base_path}/definity-spark-agent-3.5_2.12-0.80.2.jar
#
# IMPORTANT: The definity.run URL shown here is for demonstration purposes only.
# For production use, upload the agent JAR to your own artifact repository
# (Artifactory, Nexus, S3, etc.) and update this URL. For example:
#   Volumes    : "/Volumes/<catalog>/<schema>/<volume>"
#   HTTP/HTTPS : "https://your-artifactory.company.com/repository/libs-release"
#   S3         : "s3://your-bucket/definity"
#   DBFS       : "/dbfs/FileStore/jars" (legacy)
ARTIFACT_BASE_PATH="https://user:[email protected]/java"

# Version of the Definity agent (e.g. "0.80.2")
DEFINITY_AGENT_VERSION="latest"

# Definity agent token. We recommend fetching this from Databricks Secrets
# via a cluster environment variable rather than hardcoding it here.
# See docs for setup instructions.
DEFINITY_API_TOKEN="${DEFINITY_API_TOKEN:-<YOUR_AGENT_TOKEN>}"

# ============================================================================
# AUTO-DETECTION AND INSTALLATION
# ============================================================================

JAR_DIR="/databricks/jars"
mkdir -p "$JAR_DIR"

# Extract Spark major.minor version (e.g. "3.5") from /databricks/spark/VERSION
FULL_SPARK_VERSION=$(cat /databricks/spark/VERSION 2>/dev/null)
SPARK_VERSION=$(echo "$FULL_SPARK_VERSION" | grep -oE '^[0-9]+\.[0-9]+')
echo "Detected Spark version: $SPARK_VERSION"
if [ -z "$SPARK_VERSION" ]; then
  echo "Spark major.minor version is empty or not found. Will not proceed to install definity agent"
  exit 0
fi

# Extract Scala version (e.g. "2.12") from /databricks/IMAGE_KEY
DBR_VERSION=$(cat /databricks/IMAGE_KEY 2>/dev/null)
SCALA_VERSION=$(echo "$DBR_VERSION" | grep -oE 'scala([0-9]+\.[0-9]+)' | sed 's/scala//')
echo "Detected Scala version: $SCALA_VERSION"
if [ -z "$SCALA_VERSION" ]; then
  echo "Scala version is empty or not found. Will not proceed to install definity agent"
  exit 0
fi

# Build the full agent version string, e.g. "3.5_2.12-latest"
SPARK_AGENT_VERSION="${SPARK_VERSION}_${SCALA_VERSION}"
FULL_AGENT_VERSION="${SPARK_AGENT_VERSION}-${DEFINITY_AGENT_VERSION}"

# Fetch the agent JAR; the copy tool depends on the ARTIFACT_BASE_PATH prefix
AGENT_JAR_NAME="definity-spark-agent-${FULL_AGENT_VERSION}.jar"
AGENT_JAR_SRC="${ARTIFACT_BASE_PATH}/${AGENT_JAR_NAME}"
AGENT_JAR_DEST="$JAR_DIR/definity-spark-agent.jar"
echo "Fetching Definity Spark Agent ${FULL_AGENT_VERSION} from ${AGENT_JAR_SRC} ..."

if [[ "$ARTIFACT_BASE_PATH" == s3://* ]]; then
  aws s3 cp "$AGENT_JAR_SRC" "$AGENT_JAR_DEST"
elif [[ "$ARTIFACT_BASE_PATH" == /Volumes/* ]] || [[ "$ARTIFACT_BASE_PATH" == /dbfs/* ]]; then
  cp "$AGENT_JAR_SRC" "$AGENT_JAR_DEST"
else
  curl -f -o "$AGENT_JAR_DEST" "$AGENT_JAR_SRC"
fi
FETCH_STATUS=$?

if [ "$FETCH_STATUS" -ne 0 ]; then
  echo "Failed to fetch Definity Spark Agent from: $AGENT_JAR_SRC"
  echo "Cluster will start without Definity agent"
  # Remove any partial download so no broken JAR is left on the classpath
  rm -f "$AGENT_JAR_DEST"
  exit 0
fi
echo "Successfully installed Definity Spark Agent"

# Configure Definity plugin
cat > /databricks/driver/conf/00-definity.conf << EOF
spark.plugins=ai.definity.spark.plugin.DefinitySparkPlugin
spark.definity.server="https://app.definity.run"
spark.definity.api.token="$DEFINITY_API_TOKEN"
EOF

echo "Definity Spark Agent configured successfully"
```

5. Attach the Init Script to Your Compute Cluster

In the Databricks UI:

- Go to Cluster configuration → Advanced options → Init Scripts.

- Add your script with the appropriate source and path (e.g. Volumes, S3, or Workspace).

When using Volumes, set the path to /Volumes/<catalog>/<schema>/<volume>/databricks_definity_init.sh.
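After the cluster restarts, you can check that the init script completed by looking for the two files it writes on the driver. A minimal sketch, assuming you run it in a `%sh` notebook cell on the driver and kept the default paths from the sample script; `verify_definity_install` is a hypothetical helper, and it only confirms the files are present, not that the agent has connected to Definity.

```bash
# Hypothetical check that the sample init script left both artifacts in place.
# Paths default to the ones the sample script writes.
verify_definity_install() {
  local jar="${1:-/databricks/jars/definity-spark-agent.jar}"
  local conf="${2:-/databricks/driver/conf/00-definity.conf}"
  # The agent JAR must exist and be non-empty
  if [ ! -s "$jar" ]; then
    echo "Agent JAR missing or empty: $jar"
    return 1
  fi
  # The driver conf file must register the Definity plugin
  if ! grep -qF 'spark.plugins=ai.definity.spark.plugin.DefinitySparkPlugin' "$conf" 2>/dev/null; then
    echo "Definity plugin not configured in: $conf"
    return 1
  fi
  echo "Definity agent files found"
  return 0
}

# In a %sh cell on the driver:
# verify_definity_install
```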

6. Configure Cluster Name [Optional]

By default, Definity uses the Databricks cluster name as the compute name. To set a different name, go to Cluster configuration → Advanced options → Spark and add:

```
spark.definity.compute.name my_cluster_name
```

Entity Mapping

Definity follows the same pipeline hierarchy used by orchestrators like Apache Airflow. The table below shows how these entities map to their Databricks and Spark equivalents.

| Definity | Databricks | Spark | Notes |
|---|---|---|---|
| Pipeline | Workflow (Job) | — | A logical grouping of Tasks, typically orchestrated on a schedule. |
| Pipeline Run | Job Run | — | A single execution of a Pipeline. All Task Runs sharing the same pipeline.pit or pipeline.run.id belong to the same run. |
| Task | Job Task | Application (SparkContext) | The definition of a unit of compute work. In Databricks multi-task workflows, each job task maps to a Definity Task. In standalone Spark, one SparkSession = one Task. |
| Task Run | Job Task Run | Application Run | A single execution of a Task, surfaced as the Task Run page in the UI and the task_run REST API. |
| Transformation (TF) | Notebook cell / SQL statement | Job (action-triggered) | A single logical operation within a Task Run, e.g. one SQL query, one DataFrame.write(), or one Spark action. |
| Dataset | Delta Table / Unity Catalog table / file path | DataFrame / Table | A data artifact read or written by a Transformation. Definity tracks both inputs and outputs for lineage. |
| PIT (Point In Time) | Scheduled time / logical date | — | The logical time of the data being processed. Equivalent to the execution date in Airflow or the partition date of a time-partitioned table. |
| Compute | Cluster (Job Cluster / All-Purpose Cluster) | SparkSession / SparkContext | The infrastructure running the tasks. Tracked separately in shared compute mode. |

Example: Databricks Multi-Task Workflow

```
Databricks Workflow "payments-etl"      → Definity Pipeline "payments-etl"
└─ Job Run #1234 (2025-05-01)           → Pipeline Run (pit=2025-05-01)
   ├─ Job Task "ingest"                 → Task "ingest"
   │  └─ Job Task Run                   → Task Run (pit=2025-05-01)
   │     ├─ SQL: INSERT INTO payments…  → Transformation
   │     ├─ Reads: raw_payments         → Dataset (input)
   │     └─ Writes: stg_payments        → Dataset (output)
   └─ Job Task "transform"              → Task "transform"
      └─ Job Task Run                   → Task Run (pit=2025-05-01)
         ├─ SQL: CREATE TABLE …         → Transformation
         └─ Writes: payments_final      → Dataset (output)
```

Advanced Tracking Modes

The default Databricks integration tracks the compute cluster separately from workflows and automatically detects running workflow tasks. You may want to change this behavior in these scenarios:

Single-Task Cluster

If you have a dedicated cluster per task, disable shared cluster tracking mode and provide the Pipeline Tracking Parameters in the init script:

```
spark.definity.sharedCompute=false
```

Manual Task Tracking

To manually control task scopes programmatically, disable Databricks automatic tracking:

```
spark.definity.databricks.automaticSessions.enabled=false
```

Then follow the Multi-Task Shared Spark App guide.
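If you apply these settings through the init script, as the Single-Task Cluster mode suggests, one option is to write them into the same driver conf file as the default plugin settings. `write_definity_conf` below is a hypothetical refactor of the script's "Configure Definity plugin" step: it writes the same three default lines and appends any extra `key=value` settings you pass. The Pipeline Tracking Parameters are documented separately and are not shown here.

```bash
# Hypothetical refactor of the init script's "Configure Definity plugin" step:
# writes the default settings, then any extra key=value arguments.
write_definity_conf() {
  local conf_file="$1" token="$2"
  shift 2
  {
    echo "spark.plugins=ai.definity.spark.plugin.DefinitySparkPlugin"
    echo 'spark.definity.server="https://app.definity.run"'
    echo "spark.definity.api.token=\"${token}\""
    for setting in "$@"; do
      echo "$setting"
    done
  } > "$conf_file"
}

# Single-task cluster:
# write_definity_conf /databricks/driver/conf/00-definity.conf "$DEFINITY_API_TOKEN" \
#   "spark.definity.sharedCompute=false"
# Manual task tracking:
# write_definity_conf /databricks/driver/conf/00-definity.conf "$DEFINITY_API_TOKEN" \
#   "spark.definity.databricks.automaticSessions.enabled=false"
```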